In the world of Linux and Unix, repetitive chores are a fact of life. If you are just starting out, you might find yourself spending hours copying files, parsing logs, or cleaning up old data. The good news is that you can lift most of that burden with shell scripts. Bash and friends are powerful enough to automate many daily tasks, yet approachable enough for beginners to grasp with a little guided practice. This article walks you through practical, beginner-friendly automation workflows. You will learn how to pick tasks to automate, write safe scripts, and schedule them so they run reliably in the background.

No Ack dot org is a tech blog for coders covering Python, databases, Linux and web development. Our aim with this guide is to present clear, actionable steps so you can start automating tasks today. You will find practical examples, step by step instructions, and tips that help you grow from a beginner into a confident shell scripter.

Prerequisites

Before you start writing shell scripts, there are a few basics to get in place. Think of these as the foundation that makes automation possible and reliable.

- A Linux or Unix-like environment: Most commonly a Linux distribution or a macOS system with Bash available (recent macOS versions default to zsh, but Bash is still installed). Windows users can use Windows Subsystem for Linux (WSL) or a virtual machine.

- Basic command line familiarity: You should be comfortable with navigating directories, listing files, and running common commands like ls, cd, cat, and echo.

- A text editor: Any editor you like for editing scripts. Examples include nano, vim, code, or even a simple GUI editor.

- The Bash shell: Most scripts on Linux are written for Bash. You should know how to run a script and make it executable.

- Safe permissions and a testing mindset: Automating tasks can affect files and system state. Start with dry runs and non-destructive commands to confirm behavior.

- A simple script directory: Create a dedicated workspace such as ~/scripts where you keep your automation projects.

If you are new to any of these items, take a little time to practice small commands in a test directory. The goal is to build confidence so you can move on to more ambitious automation tasks without risking important data.

Task Selection

Not every task should be automated. A practical approach is to automate what you do most often, what is repetitive, and what benefits from consistent behavior. Here is a simple framework to choose your first automation projects.

- Frequency: Tasks you perform daily or weekly are prime candidates.

- Predictability: Tasks with consistent inputs and outputs are easier to automate reliably.

- Safety: Start with non-destructive tasks or things you can easily roll back.

- Observability: Choose tasks where you can verify success through a clear output or log.

Begin with a single project and iterate. As you gain confidence, you can assemble a small toolkit of scripts that work together. The aim is to automate boring parts so you can focus on high impact work like writing code, analyzing data, or building features.

A common progression for beginners is:

– Task 1: Automating File Backups

– Task 2: Automating Data Processing

– Task 3: Automating Log Analysis

– Task 4: Automating System Maintenance

– Task 5: Automating Local Application Deployment with Docker

Each task below includes a concepts covered section and a step by step guide you can adapt to your environment.

Task 1: Automating File Backups

Backing up files regularly is essential but easy to forget. A shell script can perform incremental backups, compress files, and keep old backups for a defined period.

Concepts Covered

- Why backups matter and how shell scripts can help

- Using rsync for efficient backups

- Creating incremental backups with hard links or archive files

- Excluding unnecessary files to save space

- Dry runs to test scripts safely

- Rotating old backups to keep storage in check

Step-by-Step Guide

1) Define backup goals

– Determine source directories you want to back up, for example: /home/you/projects and /etc.

– Decide a destination for backups, such as /mnt/backup/your machine or a dedicated backup server.

– Pick a backup format, for example a dated directory or compressed tar archive.

2) Create the backup script

– Open your editor and create a file named backup.sh with executable permissions.

– A simple approach uses rsync to mirror files to a backup destination:

– rsync -av --delete /home/you/projects /home/you/backup/

– The --delete flag removes files from the backup once they are deleted from the source, so the backup mirrors the source.

– Run a dry run first to verify what will be copied:

– rsync -avn --delete /home/you/projects /home/you/backup/

– Add exclusions for noise such as caches and temporary files. A pattern without a leading slash matches at any depth, and a trailing slash limits it to directories:

– --exclude '.cache/' --exclude '*.tmp'
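The commands in this step can be collected into one function. This is a minimal sketch rather than a finished tool: the function name run_backup and the DRY_RUN and RSYNC variables are assumptions made for illustration, and the paths are the placeholder paths used above.

```shell
#!/usr/bin/env bash
# backup.sh: a minimal sketch. Names, paths, and exclusions are placeholders.

# run_backup SRC DEST
# Mirrors SRC into DEST with rsync. Set DRY_RUN=1 to preview changes only.
# RSYNC is a hypothetical override hook (handy for testing); it defaults to rsync.
run_backup() {
    local src dest dry
    src=$1
    dest=$2
    dry=""
    if [ "${DRY_RUN:-0}" = "1" ]; then
        dry="-n"    # -n makes rsync report changes without applying them
    fi
    # $dry is intentionally unquoted so an empty value adds no argument.
    "${RSYNC:-rsync}" -av $dry --delete \
        --exclude='.cache/' --exclude='*.tmp' \
        "$src" "$dest"
}

# Example calls (commented out so nothing runs by accident):
# DRY_RUN=1 run_backup /home/you/projects /home/you/backup/
# run_backup /home/you/projects /home/you/backup/
```

Keeping the dry run behind a variable means the same code path is used for the preview and for the real run, so what you tested is what executes later.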

3) Make the script executable

– Run: chmod +x backup.sh

4) Test the script

– Execute: ./backup.sh

– Review the output to ensure files are being selected correctly and nothing unwanted is copied.

5) Rotate backups

– If you want dated backups, you can create a timestamp and place backups in a dated folder:

– DEST="/mnt/backup/$(date +%F)"

– rsync -av --delete /home/you/projects "$DEST"

– You can remove backups older than about a week manually or with a small script. Note that -mtime +7 selects by age, not by count:

– find /mnt/backup -maxdepth 1 -type d -name '????-??-??' -mtime +7 -exec rm -rf {} \;
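If you would rather keep a fixed number of backups than prune by age, one possible sketch is below. It swaps the -mtime test for name ordering, relying on the fact that YYYY-MM-DD names sort chronologically. The function name prune_backups is hypothetical, and it assumes directory names contain no newlines.

```shell
# prune_backups ROOT KEEP
# Keeps the KEEP newest directories named YYYY-MM-DD directly under ROOT
# and removes the rest. Other directories and files are left alone.
prune_backups() {
    local root keep
    root=$1
    keep=$2
    find "$root" -mindepth 1 -maxdepth 1 -type d -name '????-??-??' \
        | sort -r \
        | tail -n "+$((keep + 1))" \
        | while IFS= read -r old; do
            echo "removing $old"
            rm -rf -- "$old"
        done
}

# Example (commented out): keep the seven newest dated backups.
# prune_backups /mnt/backup 7
```

Try it on a scratch directory first, since rm -rf is not reversible.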

6) Schedule the backup

– Use cron to run nightly at 2 AM:

– 0 2 * * * /path/to/backup.sh

– If you use a system with systemd, you can create a timer unit that triggers the script.

7) Safety tips

– Always maintain at least one offsite copy if possible.

– Use a dry run during testing to avoid surprises.

– Log activities so you can audit what happened.

A practical backup script could be extended to backup multiple sources, compress the archive by creating a tar.gz file, and email you a summary after the run. The key is to start simple and add features gradually as you gain confidence.

Task 2: Automating Data Processing

Automating data processing is great for cleaning, transforming, or aggregating data from logs, CSVs, or other sources. A shell scripting approach can chain commands like awk, sed, cut, and sort to produce a cleaned dataset or a quick summary.

Concepts Covered

- Data pipelines in Bash

- Text processing with awk, sed, cut, and grep

- Field selection and simple aggregation

- Redirecting and saving outputs

- Simple error handling and exit codes

Step-by-Step Guide

1) Define a simple data processing goal

– Example: generate a daily summary from a CSV file containing sales data with fields like date, product, quantity, and price.

2) Write a minimal processing script

– Create a file named process_csv.sh

– Use awk to multiply quantity by price and sum the result. With the columns in the order date, product, quantity, price, those are fields 3 and 4:

– awk -F, '{sum += $3 * $4} END {print "Total revenue: " sum}' sales.csv

– If the CSV has a header row, skip it:

– awk -F, 'NR>1 {sum += $3 * $4} END {print "Total revenue: " sum}' sales.csv

– You can also total the quantity sold per product:

– awk -F, 'NR>1 {sales[$2] += $3} END {for (p in sales) print p, sales[p]}' sales.csv
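The awk one-liners above can be wrapped in small functions so they are easier to reuse and test. This sketch assumes a hypothetical layout of date,product,quantity,price with a single header row; the function names are made up for illustration. Splitting on commas does not handle quoted fields that contain commas.

```shell
# Assumed layout (hypothetical): date,product,quantity,price plus a header row.

# total_revenue FILE: sum of quantity * price over all data rows.
total_revenue() {
    awk -F, 'NR > 1 { sum += $3 * $4 } END { printf "%.2f\n", sum }' "$1"
}

# revenue_by_product FILE: one "product,revenue" line per product, sorted by name.
revenue_by_product() {
    awk -F, 'NR > 1 { rev[$2] += $3 * $4 }
             END { for (p in rev) printf "%s,%.2f\n", p, rev[p] }' "$1" | sort
}

# Example (commented out):
# total_revenue sales.csv
# revenue_by_product sales.csv
```

The trailing sort matters because awk does not guarantee any particular order when looping over an array.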

3) Add error handling and logging

– Create a log file: echo "$(date) processing started" >> process.log

– Check if the input file exists and is readable:

– if [[ -f "sales.csv" && -r "sales.csv" ]]; then … else echo "File missing" >> process.log; exit 1; fi

4) Save outputs to a report

– Redirect results to a report file:

– awk -F, '…' sales.csv > daily_report.txt
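Steps 3 and 4 fit together naturally: check the input, log what happens, then write the report. The sketch below shows one way to combine them. The function name make_report and its three arguments are assumptions, and the awk program again assumes the columns date,product,quantity,price with a header row.

```shell
# make_report INPUT REPORT LOG
# Validates INPUT, writes the revenue total to REPORT, and appends
# progress messages to LOG. Returns 1 if INPUT is missing or unreadable.
make_report() {
    local input report log
    input=$1
    report=$2
    log=$3

    echo "$(date) processing started" >> "$log"
    if [ ! -f "$input" ] || [ ! -r "$input" ]; then
        echo "$(date) input missing or unreadable: $input" >> "$log"
        return 1
    fi

    awk -F, 'NR > 1 { sum += $3 * $4 }
             END { printf "Total revenue: %.2f\n", sum }' "$input" > "$report"
    echo "$(date) report written to $report" >> "$log"
}

# Example (commented out):
# make_report sales.csv daily_report.txt process.log || exit 1
```

Returning a non-zero status instead of calling exit inside the function lets the caller decide whether a missing file should stop the whole script.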

5) Automate with a schedule

– Run daily after business hours using cron:

– 30 23 * * * /path/to/process_csv.sh >> /var/log/process_csv.log 2>&1

6) Extend with more complex pipelines

– Combine multiple steps: filter invalid lines, convert units, or join with other data files.

– You can integrate Python or R for heavier data work, but Bash can handle preprocessing and orchestration well.

A simple processing script demonstrates how you can turn raw data into meaningful summaries with minimal friction. As you grow more comfortable, you can modularize the script into functions and add configuration options to support different data sources.

Task 3: Automating Log Analysis

Log analysis helps you monitor system health, detect anomalies, and understand usage patterns. A shell based approach can parse log files, extract relevant fields, and produce a concise summary.

Concepts Covered

- Log parsing basics

- Pattern matching with grep

- Field extraction with awk

- Summaries and alert thresholds

- Rotating and archiving old logs

Step-by-Step Guide

1) Choose the logs to analyze

– Common options are /var/log/syslog, /var/log/auth.log, or application specific logs.

2) Create a script to scan for interesting events

– A simple example collects the number of failed login attempts:

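– grep -i "failed password" /var/log/auth.log | wc -l

– You can count attempts per source address. In an sshd line with the traditional syslog timestamp, field 11 is the address when the user exists; in "invalid user" lines it is the user name instead:

– grep -i "failed password" /var/log/auth.log | awk '{print $11}' | sort | uniq -c | sort -nr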
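Because the field position shifts between log line variants, a slightly more tolerant version looks for the word after "from" instead of a fixed column. This is a sketch under the assumption that your sshd messages follow the common "Failed password for ... from ADDRESS port ..." shape; the function names are hypothetical.

```shell
# count_failed LOGFILE: number of lines containing "failed password" (any case).
count_failed() {
    awk 'tolower($0) ~ /failed password/ { n++ } END { print n + 0 }' "$1"
}

# failed_by_source LOGFILE: failed attempts per source address, busiest first.
# Prints the word that follows "from", so "invalid user NAME" lines also work.
failed_by_source() {
    awk 'tolower($0) ~ /failed password/ {
             for (i = 1; i < NF; i++)
                 if ($i == "from") { print $(i + 1); break }
         }' "$1" | sort | uniq -c | sort -nr
}

# Example (commented out; reading auth.log usually needs elevated privileges):
# count_failed /var/log/auth.log
# failed_by_source /var/log/auth.log | head -n 10
```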

3) Generate a readable report

– Create a script that aggregates a few metrics, writes a summary, and saves it to a file:

– echo "Report for $(date)" > log_report.txt

– echo "Failed logins: $(grep -i 'failed password' /var/log/auth.log | wc -l)" >> log_report.txt

4) Schedule regular analysis

– Use cron to run the analysis nightly and email the result or store it in a central location:

– 0 3 * * * /path/to/log_analysis.sh >> /var/log/log_analysis.log 2>&1

5) Add alerting

– If a threshold is exceeded, trigger a notification:

– if [ "$(grep -i 'failed password' /var/log/auth.log | wc -l)" -gt 50 ]; then mail -s "Security alert" you@example.com < log_report.txt; fi
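The threshold check reads more clearly when it lives in its own function and the notification stays in the caller. A minimal sketch follows; the name over_threshold is hypothetical, and the mail command in the comment assumes a working local mail setup, which many machines do not have.

```shell
# over_threshold LOGFILE LIMIT
# Succeeds (exit 0) when the number of "failed password" lines in LOGFILE
# is greater than LIMIT. Returns 2 if LOGFILE cannot be read.
over_threshold() {
    local count
    count=$(awk 'tolower($0) ~ /failed password/ { n++ } END { print n + 0 }' "$1") || return 2
    [ "$count" -gt "$2" ]
}

# Example (commented out): alert only when the limit is exceeded.
# if over_threshold /var/log/auth.log 50; then
#     mail -s "Security alert" you@example.com < log_report.txt
# fi
```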

6) Rotate and archive

– Move old reports into an archive directory and compress if needed:

– mkdir -p /var/log/archives; mv log_report.txt /var/log/archives/log_$(date +%F).txt

A well crafted log analysis script gives you quick visibility into what is happening on your system. You can expand it to parse application logs, detect unusual spikes, or track resource usage patterns.

Task 4: Automating System Maintenance

Keeping a system healthy requires regular maintenance like cleanup, updates, and monitoring disk space. A shell script can run routine checks, perform safe cleanups, and notify you about key metrics.

Concepts Covered

- System hygiene with cleanup tasks

- Disk usage monitoring

- Package management and safe cleanup

- Idempotent operations

- Basic alerting

Step-by-Step Guide

1) Check disk usage

– A script that reports disk usage and warns when usage crosses a threshold:

– df -H | awk '$5+0 >= 80 {print $1": "$5" used"}'

– You can define a threshold variable and use it to trigger actions.
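One way to introduce the threshold variable is to pass it into awk with -v. The sketch below reads df output from standard input so the filter can be tried on sample text; the function name usage_over is hypothetical, and it assumes the POSIX output format of df -P, where column 5 is the use percentage.

```shell
# usage_over THRESHOLD
# Reads `df -P` output on stdin and prints every filesystem whose use
# percentage is at or above THRESHOLD. The header row is skipped.
usage_over() {
    awk -v limit="$1" 'NR > 1 && $5 + 0 >= limit { print $1 ": " $5 " used" }'
}

# Example (commented out):
# THRESHOLD=80
# df -P | usage_over "$THRESHOLD"
```

Adding 0 to "90%" makes awk treat the leading digits as a number, which is why the comparison works even with the percent sign present.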

2) Clean package cache safely

– On Debian based systems:

– sudo apt-get clean

– sudo apt-get autoclean

– Remove unused packages:

– sudo apt-get autoremove -y

– Integrate into a script with safety checks:

– if [ "$(df / | awk 'NR==2 {print $5+0}')" -ge 85 ]; then sudo apt-get clean; sudo apt-get autoremove -y; fi

3) Rotate and compress logs

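– Find large log files and compress them. Be careful with logs a service is still writing to, since compressing an open file can lose new entries; where logrotate is installed, it is usually the safer choice for active logs:

– find /var/log -type f -name "*.log" -size +1M -exec gzip {} \;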

4) Verify critical services

– Check if essential services are running and restart if needed:

– systemctl is-active --quiet sshd || systemctl restart sshd
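For more than one service, the same check can be wrapped in a function that also reports what it did. This is a sketch: ensure_service and the SYSTEMCTL override are hypothetical names, restarting normally requires root, and the unit name varies by distribution (the SSH unit is often called ssh on Debian and Ubuntu).

```shell
# ensure_service NAME
# Restarts NAME only when systemctl reports it as not active.
# SYSTEMCTL is a hypothetical override hook for testing; it defaults to systemctl.
ensure_service() {
    local ctl
    ctl=${SYSTEMCTL:-systemctl}
    if "$ctl" is-active --quiet "$1"; then
        echo "$1 is active"
    else
        echo "$1 is not active, restarting"
        "$ctl" restart "$1"
    fi
}

# Example (commented out):
# for svc in sshd cron; do ensure_service "$svc"; done
```

Because an active service is left untouched, running the function repeatedly has no side effects, which is the idempotent behavior this task aims for.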

5) Schedule regular maintenance

– Cron entry to run weekly on Sunday at 2 AM:

– 0 2 * * 0 /path/to/system_maintenance.sh

6) Observability and reporting

– Append a short summary to a log file to prove what actions were taken:

– echo “$(date): ran maintenance, disk usage below threshold” >> /var/log/maintenance.log

A maintenance script helps you keep a clean and healthy system with predictable behavior. The key is to implement actions that are safe to run repeatedly and provide meaningful feedback when something goes wrong.

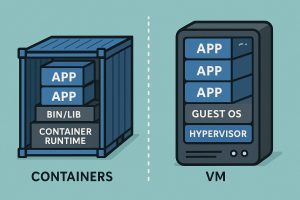

Task 5: Automating Local Application Deployment with Docker

If you develop software locally, automating the build and deployment of a containerized application can save time and reduce environment drift. A shell script can orchestrate building a Docker image, starting containers, and running migrations or seed data.

Concepts Covered

- Lightweight application deployment using Docker

- Building images, containers, and docker run options

- Compose friendly workflows

- Idempotent deployment steps

- Local environment parity with production

Step-by-Step Guide

1) Define the deployment goals

– Build a Docker image from a Dockerfile in your project.

– Start a container with the necessary ports and volumes.

– Run any required migrations or seed data.

2) Create a deployment script

– Name it deploy.sh and make it executable.

– Sample steps:

– docker compose -f docker-compose.dev.yml up --build -d

– If your project uses a database or migrations, run a script inside the container or in the host as needed.

– Example:

– docker exec -i app_backend python manage.py migrate

– If you are not using Compose, you can run:

– docker build -t myapp:dev .

– docker run -d -p 8000:8000 --name myapp myapp:dev
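A deploy.sh built from the Compose commands above might look like the sketch below. The run helper and the DRY_RUN variable are assumptions added for illustration; the compose file name and the app_backend container come from the sample steps and will differ in your project. Migrations can fail if the container is not ready yet, so a real script may need a wait or retry.

```shell
#!/usr/bin/env bash
# deploy.sh: a minimal sketch. File and container names are placeholders.

# run CMD...: print the command, then execute it unless DRY_RUN=1.
run() {
    echo "+ $*"
    if [ "${DRY_RUN:-0}" != "1" ]; then
        "$@"
    fi
}

# deploy: build and start the stack, then apply migrations.
# The second command only runs if the first one succeeds.
deploy() {
    run docker compose -f docker-compose.dev.yml up --build -d &&
        run docker exec -i app_backend python manage.py migrate
}

# Example (commented out): preview first, then deploy for real.
# DRY_RUN=1 deploy
# deploy
```

Printing each command before it runs gives you a simple audit trail in the terminal or in a log file.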

3) Add environment awareness

– Use a .env file or export variables for local development:

– APP_ENV=development

– DB_HOST=localhost

– In your script, source the env file if available:

– if [ -f .env ]; then export $(cat .env | xargs); fi
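The one-liner above works for simple values but splits on whitespace, so a value containing spaces will break it. A possible alternative is to let the shell parse the file itself, as sketched below. The function name load_env is hypothetical. Sourcing executes the file as shell code, so only load files you trust.

```shell
# load_env FILE
# Exports every VAR=value assignment in FILE, if FILE exists.
# set -a marks variables for export as they are assigned while sourcing.
load_env() {
    if [ -f "$1" ]; then
        set -a
        . "$1"
        set +a
    fi
}

# Example (commented out). The ./ prefix avoids a PATH lookup for the file.
# load_env ./.env
# echo "$APP_ENV"
```

Values with spaces need quotes in the file, for example GREETING="hello world".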

4) Make the deployment repeatable

– Put critical steps behind functions and ensure the script can be re-run safely.

– Use Docker Compose for multi-service applications to simplify orchestration.

5) Test and verify

– After starting, check running containers:

– docker ps

– Confirm that the application is reachable at its port and that migrations ran correctly.

6) Cleanup in development

– Implement a cleanup step to stop and remove containers when you are done:

– docker compose -f docker-compose.dev.yml down

Automating local Docker based deployment reduces friction when you are iterating on new features. It also helps you replicate a similar environment on your colleagues' machines or in CI.

Getting Started with Scheduling and Testing

Automation is powerful only when it runs on a schedule or on demand without constant manual intervention. Two common approaches in Linux environments are cron jobs and systemd timers.

- Cron

- Simple and reliable for time based tasks.

- Edit crontab with crontab -e and add lines like: 0 2 * * * /path/to/script.sh

- Pros: easy, widely supported.

- Cons: not aware of system state, lacks heavy logging by default.

- Systemd timers

- More modern and integrated with the systemd manager.

- Timers pair with service units and can be more robust in complex environments.

- Pros: better logging, dependencies, and failure handling.

- Cons: more setup, requires systemd aware scripting.

Testing tips for scheduling:

– Always test with a dry run or echo statements before changing files or state.

– Redirect output to a log file: /path/to/script.sh >> /var/log/myscript.log 2>&1

– Use a test environment when possible to avoid affecting production data.
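The logging tip can be taken one step further with a small wrapper that timestamps each run and records the exit status. This is a sketch; the name run_logged is hypothetical, and the log path in the example is the placeholder used above.

```shell
# run_logged LOGFILE CMD...
# Runs CMD with stdout and stderr appended to LOGFILE, framed by
# timestamped start and end lines. Returns CMD's exit status.
run_logged() {
    local logfile status
    logfile=$1
    shift
    echo "$(date '+%F %T') start: $*" >> "$logfile"
    status=0
    "$@" >> "$logfile" 2>&1 || status=$?
    echo "$(date '+%F %T') end: exit status $status" >> "$logfile"
    return "$status"
}

# Example (commented out):
# run_logged /var/log/myscript.log /path/to/script.sh
```

While testing a schedule, you can pass a harmless command such as echo in place of the real script to confirm that the job fires and the log is written.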

For Windows users or those on WSL, you can still leverage cron or systemd depending on your setup. The core idea is to define the job, test it, and verify results are being recorded and acted upon.

Best Practices for Shell Scripting

To help your automation stay reliable and maintainable as your project grows, keep these practices in mind:

- Start small and iterate: build a minimal working script and add features gradually.

- Use clear, descriptive names: name scripts and variables clearly so others understand their purpose.

- Idempotence: ensure a script can run multiple times without causing unintended side effects.

- Fail fast: validate inputs and exit early if something is wrong.

- Use logging: write meaningful messages to a log so you can audit what happened.

- Error handling: check exit codes and handle failures gracefully.

- Environment awareness: do not assume certain paths or user permissions; check for required tools before running.

- Security: avoid exposing secrets in scripts; prefer environment variables or secure vaults.

- Documentation: include a short usage section at the top of the script to explain what it does and how to run it.

- Portability: write scripts that work across common shells or clearly indicate the required shell.
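Several of these practices can be seen together in a small skeleton. The sketch below is one possible starting point, not a standard layout: the names log, require_cmd, and main, the script name template.sh, and the list of required tools are all assumptions.

```shell
#!/usr/bin/env bash
# Usage: template.sh SOURCE_DIR
# Hypothetical skeleton showing a usage note, fail-fast checks, and logging.

# log MESSAGE: timestamped message on stderr.
log() {
    printf '%s %s\n' "$(date '+%F %T')" "$*" >&2
}

# require_cmd NAME...: fail if any required tool is missing from PATH.
require_cmd() {
    local cmd
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            log "missing required command: $cmd"
            return 1
        fi
    done
}

# main SOURCE_DIR: validate inputs before doing any work.
main() {
    if [ "$#" -ne 1 ]; then
        log "usage: template.sh SOURCE_DIR"
        return 2
    fi
    require_cmd awk grep || return 1
    if [ ! -d "$1" ]; then
        log "not a directory: $1"
        return 1
    fi
    log "checks passed for $1"
}

# In a standalone Bash script you would typically enable strict mode
# and then call main (commented out here so the sketch has no side effects):
# set -euo pipefail
# main "$@"
```

Writing log messages to stderr keeps them separate from any data the script prints on stdout, which matters once you start piping scripts together.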

Troubleshooting Common Issues

As you begin to automate tasks, you may encounter common issues. Here are quick tips to resolve them:

- Command not found: Ensure required tools are installed (for example rsync, awk, grep) and on PATH.

- Permission denied: Make scripts executable (chmod +x) and ensure the script has the necessary permissions to read or write files.

- Path issues: Use absolute paths or carefully manage the working directory inside the script.

- Non zero exit codes: Check the last error, capture it, and handle it gracefully rather than letting the script fail silently.

- Log file growth: When logging, rotate logs or use a logging framework to prevent disk space exhaustion.

- Scheduling failures: Confirm the cron or systemd timer is enabled and that the script path is correct. Check system logs for scheduling issues.

Conclusion

Automating tasks with shell scripts is a practical and empowering skill for any Linux and Unix practitioner. By starting with foundational prerequisites, choosing safe, repetitive tasks, and following a disciplined approach to testing and scheduling, you can shave hours off your week and reduce the chance of human error. The five tasks covered in this guide — file backups, data processing, log analysis, system maintenance, and local Docker deployment — provide a solid, real world launching pad for your automation journey.

Remember the guiding principles:

– Start small and build confidence with simple, safe tasks.

– Emphasize idempotence and safe defaults.

– Test thoroughly with dry runs and logging.

– Schedule tasks where they fit naturally, and monitor results to learn and improve.

As you gain experience, you can expand your toolkit with more sophisticated patterns, such as modular scripts, libraries for parsing data, or integrating with CI pipelines. The goal is not to write perfect scripts on day one but to cultivate a reliable automation habit that makes your work faster, repeatable, and less error prone. No Ack dot org is excited to see what you build next with shell scripting. Keep experimenting, stay curious, and welcome to the world of automation.